Barndoor.ai

CASE STUDY

Venn: How Moda Launched a New AI Product for Barndoor in 6 Months

Published · By Moda Labs

Highlights

- 5x MCP integrations with multiple MCPs shipped per week

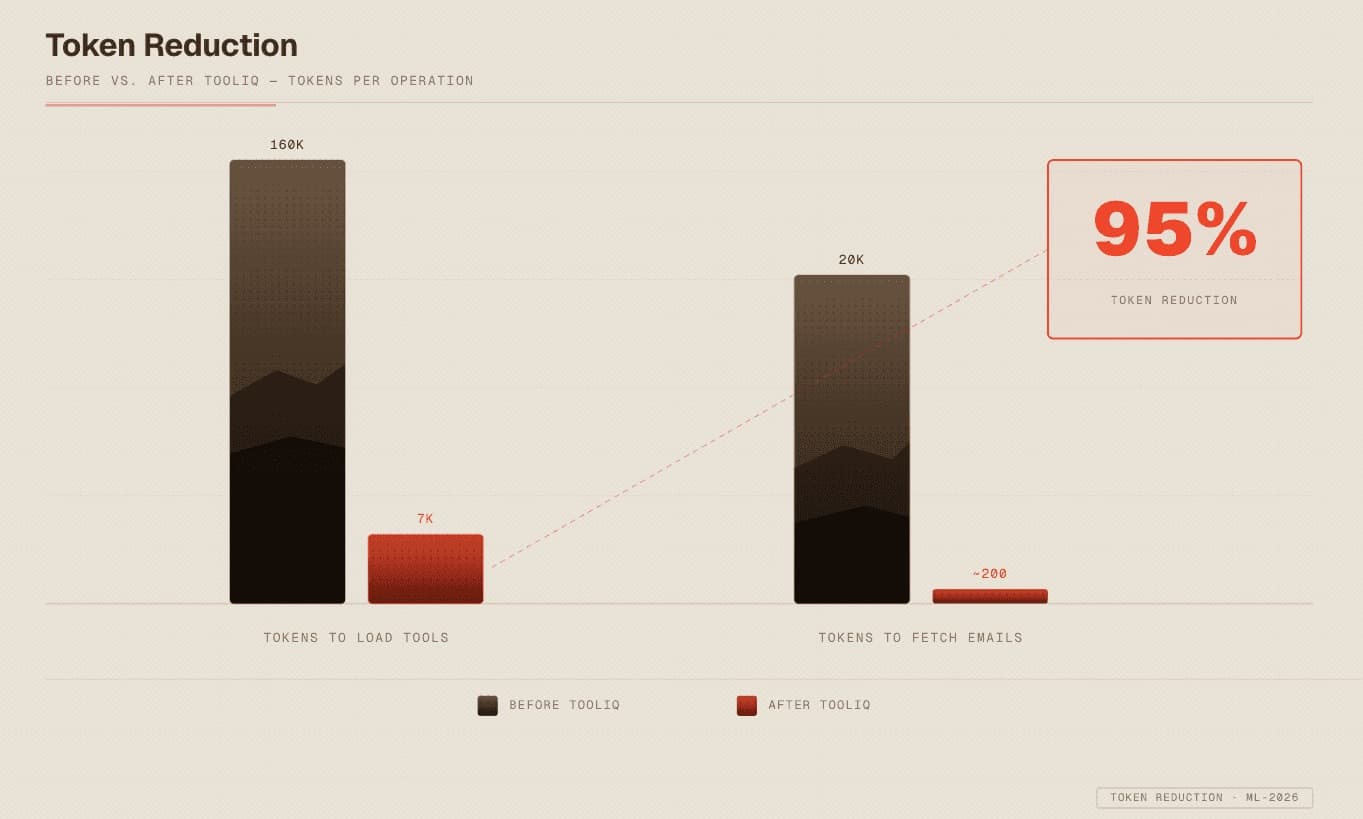

- 95% token reduction when using MCPs with AI agents

- Venn: delivered a self-service commercial product

What Was Barndoor’s Challenge?

Barndoor helps enterprises use AI safely. They provide governance policies and access controls for AI agents connecting to third-party services like Salesforce, Slack, and Google Drive.

In mid-2025, they hit a classic chicken-and-egg problem. AI governance only matters if there’s something to govern. But the MCP (Model Context Protocol) ecosystem was thin, and Barndoor had only a few prototypes. Customers kept asking the same question: “What exactly am I governing?” MCP was still new, and Barndoor knew they needed to lead, not follow.

They had a core engineering team but weren’t ready to hire another squad of AI engineers. Hiring is slow and expensive, and they needed momentum they could trust.

The Solution

Moda Labs came in as a second execution team, embedded with Barndoor rather than operating at arm’s length. With hands-on founders (a PM and an engineer) plus four engineers across frontend and backend, Moda delivered a shovel-ready team already fluent in AI.

Phase 1 · Aug–Sept 2025

MCP Factory

The first priority was scaling the integration library.

We built a repeatable system for spinning up MCPs: code patterns that leverage OpenAPI specs, a testing framework that catches problems early, and support for remote-hosted MCPs like Notion’s official server.
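To make the OpenAPI-driven pattern concrete, here is a minimal sketch of how an OpenAPI operation can be mapped to an MCP-style tool definition (`name`, `description`, `inputSchema`). This is an illustration, not the production generator: `tools_from_openapi` and `SAMPLE_SPEC` are hypothetical names, and the sketch only handles `parameters`, ignoring request bodies and authentication.

```python
import re
from typing import Any

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def tools_from_openapi(spec: dict[str, Any]) -> list[dict[str, Any]]:
    """Turn each OpenAPI operation into an MCP-style tool definition (hypothetical helper)."""
    tools = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            properties: dict[str, Any] = {}
            required: list[str] = []
            for param in op.get("parameters", []):
                properties[param["name"]] = param.get("schema", {"type": "string"})
                if param.get("required"):
                    required.append(param["name"])
            # Fall back to a name derived from method + path when operationId is missing.
            fallback = f"{method}_" + re.sub(r"[^a-zA-Z0-9]+", "_", path).strip("_")
            tools.append({
                "name": op.get("operationId") or fallback,
                "description": op.get("summary", ""),
                "inputSchema": {
                    "type": "object",
                    "properties": properties,
                    "required": required,
                },
            })
    return tools


# Illustrative spec fragment, not taken from any real service.
SAMPLE_SPEC = {
    "openapi": "3.0.0",
    "paths": {
        "/pages/{page_id}": {
            "get": {
                "operationId": "get_page",
                "summary": "Fetch a page by ID",
                "parameters": [
                    {"name": "page_id", "in": "path", "required": True,
                     "schema": {"type": "string"}}
                ],
            }
        }
    },
}

tools = tools_from_openapi(SAMPLE_SPEC)
print(tools[0]["name"])
```

Because the tool definitions are derived mechanically from the spec, a new integration mostly needs a spec and a test pass rather than hand-written wrappers.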

Within weeks, we were shipping 2-3 new integrations per week. By late September, Barndoor’s library had grown 5x and continued to scale into 2026.

Phase 2 · Oct–Nov 2025

ToolIQ

Scaling the library exposed a deeper problem with context limitations.

Through dogfooding, we found that loading just 2-3 MCP servers would fill the entire 200k token context window. Pulling 3-5 emails in a single response caused the same overflow. This wasn’t a known issue yet — Anthropic wouldn’t publish their progressive disclosure approach until November.

So we built the fix:

Single entry point for all MCPs. Rather than configuring MCP servers fresh with every chat session, we created a tool router that lets users configure once and use everywhere, giving access to all their MCPs from a single place.
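A single entry point can be pictured as one object that owns every registered server and dispatches calls by a qualified tool name. The sketch below is a simplified, in-process illustration under that assumption; `ToolRouter`, the `server.tool` naming scheme, and the lambda handlers are all hypothetical, and real MCP servers would be reached over a transport rather than called directly.

```python
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], Any]


class ToolRouter:
    """One gateway in front of many tool servers (illustrative only)."""

    def __init__(self) -> None:
        self._servers: dict[str, dict[str, Handler]] = {}

    def register(self, server: str, tools: dict[str, Handler]) -> None:
        # Configure a server once; every later session reuses it.
        self._servers[server] = dict(tools)

    def list_tools(self) -> list[str]:
        return sorted(
            f"{server}.{tool}"
            for server, tools in self._servers.items()
            for tool in tools
        )

    def call(self, qualified_name: str, arguments: dict[str, Any]) -> Any:
        # "slack.post_message" -> server "slack", tool "post_message"
        server, _, tool = qualified_name.partition(".")
        try:
            handler = self._servers[server][tool]
        except KeyError:
            raise LookupError(f"unknown tool: {qualified_name}") from None
        return handler(arguments)


router = ToolRouter()
router.register("slack", {"post_message": lambda a: f"posted: {a['text']}"})
router.register("notion", {"get_page": lambda a: {"id": a["page_id"]}})

print(router.list_tools())
print(router.call("slack.post_message", {"text": "hi"}))
```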

Progressive disclosure for tools. Instead of loading every tool definition upfront, we search by name first (minimal tokens), then describe only the tools the agent needs. Token usage dropped from 160k to 7k in preliminary tests.
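The two-step lookup can be sketched as a catalog with a cheap `search` call that returns names only and a `describe` call that returns full definitions for the handful of tools the agent picks. The class and tool names here are hypothetical, and substring matching stands in for whatever search the real router uses.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    input_schema: dict = field(default_factory=dict)


class ToolCatalog:
    """Progressive disclosure: names first, full schemas only on request."""

    def __init__(self, tools: list[Tool]) -> None:
        self._tools = {tool.name: tool for tool in tools}

    def search(self, query: str, limit: int = 5) -> list[str]:
        # Step 1: return matching names only, which costs very few tokens.
        q = query.lower()
        return [name for name in sorted(self._tools) if q in name.lower()][:limit]

    def describe(self, names: list[str]) -> list[dict]:
        # Step 2: return full definitions for just the selected tools.
        return [
            {
                "name": self._tools[n].name,
                "description": self._tools[n].description,
                "inputSchema": self._tools[n].input_schema,
            }
            for n in names
            if n in self._tools
        ]


catalog = ToolCatalog([
    Tool("gmail_send_email", "Send an email", {"type": "object"}),
    Tool("gmail_list_emails", "List recent emails", {"type": "object"}),
    Tool("slack_post_message", "Post a message to a channel", {"type": "object"}),
])

names = catalog.search("email")
details = catalog.describe(names[:1])
print(names, details[0]["description"])
```

The saving comes from the asymmetry: a list of names is tiny, while each full schema can run to hundreds or thousands of tokens, so the agent pays for schemas only when it is about to use them.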

Code execution for responses. Raw API responses are bloated with formatting. We run code to parse and strip responses before anything hits the context window. Loading emails went from consuming 20k tokens to a few hundred.
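As a rough illustration of response stripping, the sketch below reduces a raw email payload to the few fields an agent typically needs and converts the HTML body to plain text. The payload shape (`from`, `subject`, `body_html`, `headers`) and the `compact_email` helper are assumptions made for the example, not the format of any particular email API.

```python
import re
from html import unescape


def compact_email(raw: dict, max_body_chars: int = 500) -> dict:
    """Keep only sender, subject, and a plain-text body (hypothetical helper)."""
    body = raw.get("body_html", "")
    # Drop <style>/<script> blocks entirely, then strip remaining tags.
    body = re.sub(r"<(style|script)\b.*?</\1>", " ", body, flags=re.S | re.I)
    body = re.sub(r"<[^>]+>", " ", body)
    body = unescape(body)  # decode entities such as &amp;
    body = re.sub(r"\s+", " ", body).strip()
    return {
        "from": raw.get("from"),
        "subject": raw.get("subject"),
        "body": body[:max_body_chars],
    }


# Illustrative payload, not a real API response.
raw_email = {
    "from": "sam@example.com",
    "subject": "Quarterly numbers",
    "headers": {"X-Mailer": "example", "Received": "..."},
    "body_html": (
        "<html><style>p{color:red}</style>"
        "<p>Hello team,</p><p>Q3 &amp; Q4   numbers attached.</p></html>"
    ),
}

compact = compact_email(raw_email)
print(compact["body"])
```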

We called it ToolIQ and shipped it in December, with an eval system to test across all the MCP servers.
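The core idea of such an eval can be shown in a few lines: run a fixed set of prompts per server and score how often the agent selects the expected tool. This sketch is plain Python with a keyword stub standing in for a real agent. It does not use LangGraph, OpenTelemetry, or Arize, and `EvalCase`, `run_evals`, and `keyword_agent` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    server: str
    prompt: str
    expected_tool: str


def run_evals(cases: list[EvalCase], pick_tool: Callable[[str], str]) -> dict[str, float]:
    """Return, per server, the fraction of cases where the expected tool was picked."""
    passed: dict[str, int] = {}
    total: dict[str, int] = {}
    for case in cases:
        total[case.server] = total.get(case.server, 0) + 1
        if pick_tool(case.prompt) == case.expected_tool:
            passed[case.server] = passed.get(case.server, 0) + 1
    return {server: passed.get(server, 0) / count for server, count in total.items()}


def keyword_agent(prompt: str) -> str:
    # Stand-in for a real agent: deliberately naive so one case fails.
    text = prompt.lower()
    if "post" in text:
        return "slack.post_message"
    if "email" in text:
        return "gmail.list_emails"
    return "unknown"


cases = [
    EvalCase("slack", "Post a message to #general", "slack.post_message"),
    EvalCase("gmail", "List my unread emails", "gmail.list_emails"),
    EvalCase("gmail", "Send an email to Sam", "gmail.send_email"),
]

scores = run_evals(cases, keyword_agent)
print(scores)
```

Scoring per server makes regressions easy to localize when a new MCP server is added or a tool description changes.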

Phase 3 · Dec 2025–Feb 2026

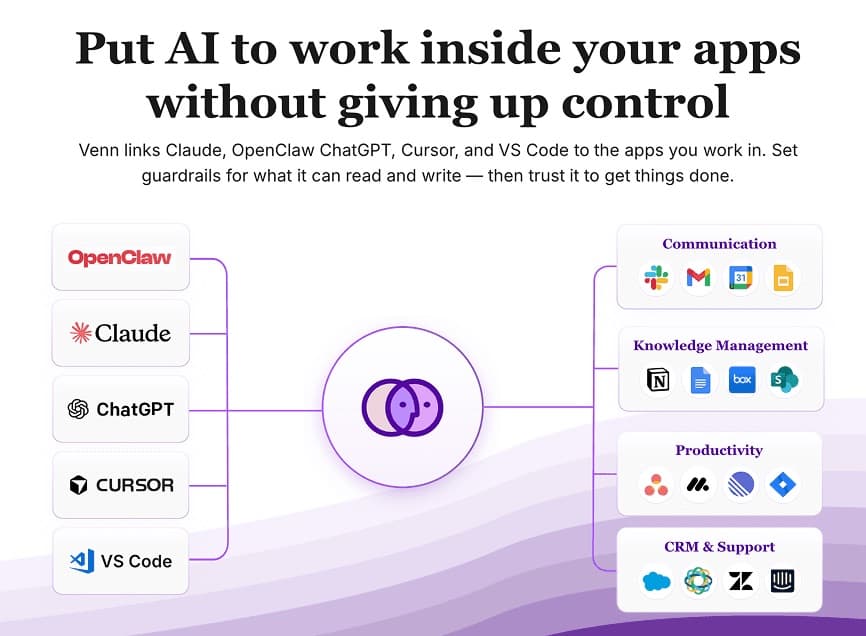

Venn

In November, three months into the engagement, Barndoor needed to productize their MCP work quickly. Rather than wait on a slow hiring cycle, they chose to keep working with Moda, a team that had already earned their trust through consistent execution and strong expertise.

They extended the contract. We spent three more months turning the infrastructure into Venn: a self-service product targeted towards end users that launched in February 2026.

What started as platform acceleration had become a new revenue stream.

Before Moda Labs:

- A few MCP integration prototypes

- Engineering team stretched between core product and MCP library

- Barndoor needed to deliver on a broader integration story

After Moda Labs:

- 5x MCP integrations with several new MCPs shipping per week

- 95% reduction in token usage across tool use

- New commercial product launched February 2026

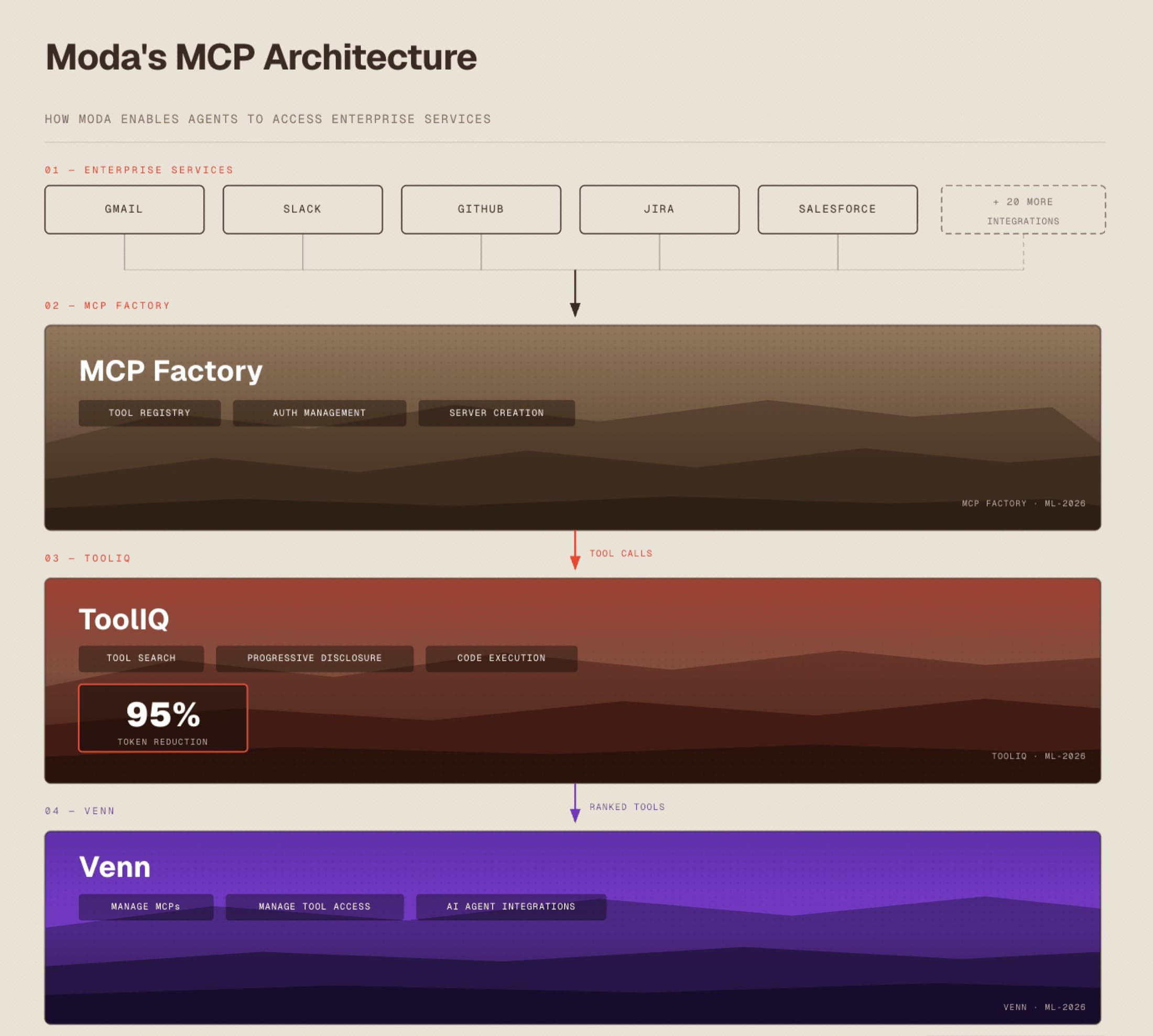

ARCHITECTURE

Looking Under the Hood

Project Setup

- Team: Moda Labs founders (Product, Engineering), plus 2 onshore and 2 offshore engineers

- MCP System: Agentic MCP builds from OpenAPI specs that handle OAuth and API key authentication, and automate tool management

- Models: Claude and ChatGPT for agent orchestration and tool calling; Claude Code for agentic development workflows

- Tool Router: Common routing gateway with progressive disclosure and code execution

- Eval System: Automated agent evaluation pipeline built on LangGraph, OpenTelemetry, and Arize

The system has three layers:

- 01 · MCP Factory: Generates tool definitions from OpenAPI specs, packages them as MCP servers, and runs automated tests for quality.

- 02 · Tool Router (ToolIQ): Sits between the agent and MCP servers. Handles tool search, progressive disclosure, and response parsing. The agent only sees what's relevant to the current task.

- 03 · Venn: Web application where users configure their MCPs, connect to their services, and manage access controls in one place.

Why This Worked

AI depth, not just engineering capacity. Barndoor’s team had engineers, but they were stretched thin. They needed a trusted partner who could spot problems before customers hit them and build solutions the market hadn’t seen yet. Moda Labs brought a full squad with the intuition to move at the frontier of what’s possible.

What’s Next

Barndoor now has two levers: their core governance platform and Venn, both running on a single stable foundation. Moda Labs continues the partnership, building skills and memory into ToolIQ while maintaining the MCP factory. The engagement started as platform acceleration, then became a new revenue stream.